Top AI Infrastructure Companies: A Comprehensive Comparison Guide

Artificial intelligence (AI) is no longer just a buzzword; many businesses are struggling to scale models because they lack the right infrastructure. AI infrastructure comprises technologies for computing, data management, networking, and orchestration that work together to train, deploy, and serve models. In this guide, we’ll explore the market, compare top AI infrastructure companies, and highlight new trends that will transform computing. Understanding this space will empower you to make better decisions whether you’re building a startup or modernizing your operations.

Quick Summary: What Will You Learn in This Guide?

- What is AI infrastructure? A specialized technology stack—including computation, data, platform services, networking, and governance—that supports model training and inference.

- Why should you care? The market is projected to grow from $23.5 billion in 2021 to over $309 billion by 2031. Businesses spend billions on specialist chips, GPU data centers, and MLOps platforms.

- Who are the leaders? Major cloud platforms like AWS, Google Cloud, and Azure dominate, while hardware giants NVIDIA and AMD produce cutting-edge GPUs. Rising players like CoreWeave and Lambda Labs offer affordable GPU clouds.

- How to choose? Consider computational power, cost transparency, latency, energy efficiency, security, and ecosystem support. Sustainability matters—training GPT-3 consumed 1,287 MWh of electricity and released 552 tons of CO₂.

- Clarifai’s view: Clarifai helps businesses manage data, run models, and deploy them across cloud and edge contexts. It offers local runners and managed inference for quick iteration with cost control and compliance.

What Is AI Infrastructure, and Why Is It Important?

What Makes AI Infrastructure Different from Traditional IT?

AI infrastructure is built for high-compute workloads like training language models and running computer vision pipelines. Traditional servers struggle with large tensor computations and high data throughput, so AI systems rely on accelerators like GPUs, TPUs, and ASICs for parallel processing. Additional components include data pipelines, MLOps platforms, network fabrics, and governance frameworks, which ensure repeatability and regulatory compliance. NVIDIA CEO Jensen Huang has called AI “the essential infrastructure of our time,” highlighting that AI workloads need a tailored stack.

Why Is an Integrated Stack Essential?

To train advanced models, teams must coordinate compute resources, storage, and orchestration across clusters. DataOps 2.0 tools handle data ingestion, cleaning, labeling, and versioning. After training, inference services must respond quickly. Without a unified stack, teams face bottlenecks, hidden costs, and security issues. A survey by the AI Infrastructure Alliance shows only 5–10 % of businesses have generative AI in production due to complexity. Adopting a full AI-optimized stack enables organizations to accelerate deployment, reduce costs, and maintain compliance.

Expert Opinions

- New architectures matter: Bessemer Venture Partners notes that state-space models and Mixture-of-Experts architectures lower compute requirements while preserving accuracy.

- Next-generation GPUs and algorithms: Devices like NVIDIA H100/B100 and techniques such as Ring Attention and KV-cache optimization dramatically speed up training and inference.

- DataOps & observability: As models grow, teams need robust DataOps and observability tools to manage datasets and monitor bias, drift, and latency.

What Is the Current AI Infrastructure Market Landscape?

How Big Is the Market and What’s the Growth Forecast?

The AI infrastructure market is booming. ClearML and the AI Infrastructure Alliance report it was worth $23.5 billion in 2021 and will grow to over $309 billion by 2031. Generative AI is expected to hit $98.1 billion by 2025 and $667 billion by 2030. In 2024, global cloud infrastructure spending reached $336 billion, with half of the growth attributed to AI. By 2025, cloud AI spending is projected to exceed $723 billion.
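To put those forecasts on a common footing, it can help to convert each pair of endpoints into an implied compound annual growth rate (CAGR). The sketch below uses only the dollar figures quoted above; the function name is illustrative, and the calculation assumes smooth year-over-year growth, which real markets rarely show.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above, in billions of US dollars.
infra = cagr(23.5, 309.0, 2031 - 2021)
genai = cagr(98.1, 667.0, 2030 - 2025)

print(f"AI infrastructure, 2021-2031: {infra:.1%} per year")
print(f"Generative AI, 2025-2030: {genai:.1%} per year")
```

Under these assumptions, the infrastructure forecast implies growth of roughly 29 % a year and the generative AI forecast roughly 47 % a year.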

How Wide Is the Adoption Across Industries?

Generative AI adoption spans multiple sectors:

- Healthcare (47 %)

- Financial services (63 %)

- Media and entertainment (69 %)

Big players are investing heavily in AI infrastructure: Microsoft plans to spend $80 billion, Alphabet up to $75 billion, Meta between $60 billion and $65 billion, and Amazon around $100 billion. Meanwhile, 96 % of organizations intend to further expand their AI computing power, and 64 % already use generative AI, illustrating the rapid pace of adoption.

Expert Opinions

- Enterprise embedding: By 2025, 67 % of AI spending will come from businesses integrating AI into core operations.

- Industry valuations: Startups like CoreWeave are valued near $19 billion, reflecting strong demand for GPU clouds.

- Regional dynamics: North America holds 38.9 % of generative AI revenue, while Asia-Pacific experiences 47 % year-over-year growth.

How Are AI Infrastructure Providers Classified?

Compute and accelerators

The compute layer supplies raw power for AI. It includes GPUs, TPUs, AI ASICs, and emerging photonic chips. Major hardware companies like NVIDIA, AMD, Intel, and Cerebras dominate, but specialized providers (AWS with Trainium and Inferentia, Groq, Etched, Tenstorrent) deliver custom chips for specific tasks. Photonic chips promise almost zero energy use in convolution operations. Later sections cover each vendor in more detail.

Cloud & hyperscale platforms

Major hyperscalers provide all-in-one stacks that combine computing, storage, and AI services. AWS, Google Cloud, Microsoft Azure, IBM, and Oracle offer managed training, pre-built foundation models, and bespoke chips. Regional clouds like Alibaba and Tencent serve local markets. These platforms attract enterprises seeking security, global availability, and automated deployment.

AI‑native cloud start‑ups

New entrants such as CoreWeave, Lambda Labs, Together AI, and Voltage Park focus on GPU-rich clusters optimized for AI workloads. They offer on-demand pricing, transparent billing, and quick scaling without the overhead of general-purpose clouds. Some, like Groq and Tenstorrent, create dedicated chips for ultra-low-latency inference.

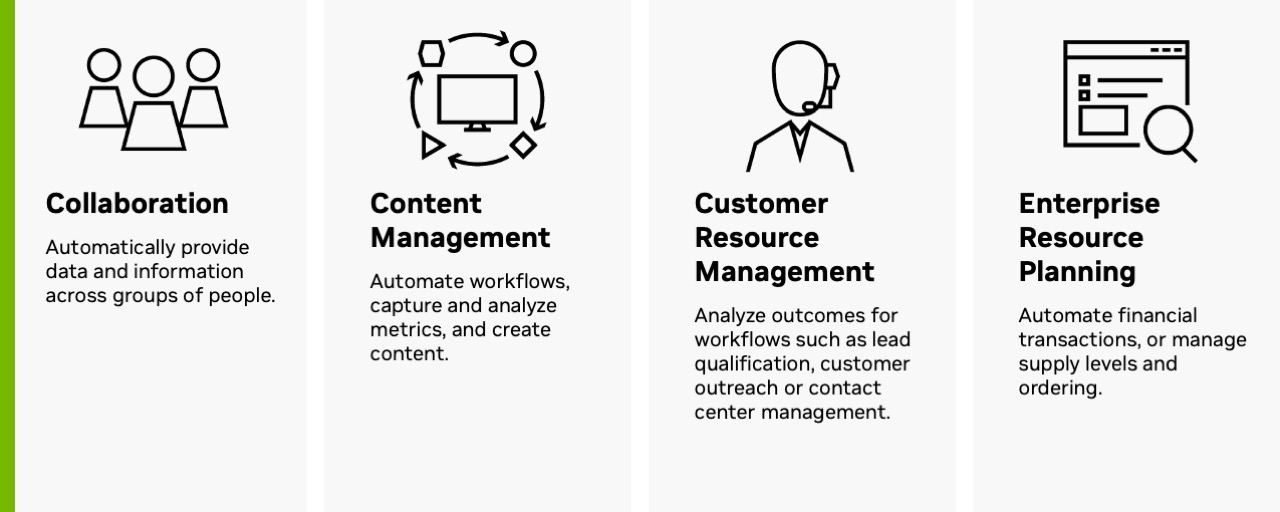

DataOps, observability & orchestration

DataOps 2.0 platforms handle data ingestion, classification, versioning, and governance. Tools like Databricks, MLflow, ClearML, and Hugging Face provide training pipelines and model registries. Observability services (e.g., Arize AI, WhyLabs, Credo AI) monitor performance, bias, and drift. Frameworks like LangChain, LlamaIndex, Modal, and Foundry enable developers to link models and agents for complex tasks. These layers are essential for deploying AI in real-world environments.

Expert Opinions

- Modular stacks: Bessemer points out that the AI infrastructure stack is increasingly modular—different providers cover compute, deployment, data management, observability, and orchestration.

- Hybrid deployments: Organizations leverage cloud, hybrid, and on-prem deployments to balance cost, performance, and data sovereignty.

- Governance importance: Governance is now seen as central, covering security, compliance, and ethics.

Who Are the Top AI Infrastructure Companies?

Clarifai

Clarifai stands out in the LLMOps + Inference Orchestration + Data/MLOps space, serving as an AI control plane. It links data, models, and compute across cloud, VPC, and edge environments—unlike hyperscale clouds that focus primarily on raw compute. Clarifai’s key strengths include:

- Compute orchestration that routes workloads to the best-fit GPUs or specialized processors across clouds or on-premises.

- Autoscaling inference endpoints and Local Runners for air-gapped or low-latency deployments, enabling rapid deployment with predictable costs.

- Integration of data labeling, vector search, retrieval-augmented generation (RAG), finetuning, and evaluation into one governed workflow—eliminating brittle glue code.

- Enterprise governance with approvals, audit logs, and role-based access control to ensure compliance and traceability.

- A multi-cloud and on-prem strategy to reduce total cost and prevent vendor lock-in.

For organizations seeking both control and scale, Clarifai becomes the infrastructure backbone—reducing the total cost of ownership and ensuring consistency from lab to production.

Amazon Web Services

AWS excels at AI infrastructure. SageMaker simplifies model training, tuning, deployment, and monitoring. Bedrock provides APIs to both proprietary and open foundation models. Custom chips like Trainium (training) and Inferentia (inference) offer excellent price-performance. Nova, a family of generative models, and Graviton processors for general compute add versatility. The global network of AWS data centers ensures low-latency access and regulatory compliance.

Expert Opinions

- Accelerators: AWS’s Trainium chips deliver up to 30 % better price-performance than comparable GPUs.

- Bedrock’s flexibility: Integration with open-source frameworks lets developers fine-tune models without worrying about infrastructure.

- Serverless inference: AWS supports serverless inference endpoints, reducing costs for applications with bursty traffic.

Google Cloud AI

At Google Cloud, Vertex AI anchors the AI stack—managing training, tuning, and deployment. TPUs accelerate training for large models such as Gemini and PaLM. Vertex integrates with BigQuery, Dataproc, and Datastore for seamless data ingestion and management, and supports pre-built pipelines.

Insights from Experts

- TPU advantage: TPUs handle matrix multiplication efficiently, ideal for transformer models.

- Data fabric: Integration with Google’s data tools ensures seamless operations.

- Open models: Google releases open models like Gemma to encourage collaboration while leveraging its compute infrastructure.

Microsoft Azure AI

Microsoft Azure AI offers AI services through Azure Machine Learning, Azure OpenAI Service, and Foundry. Users can choose from NVIDIA GPUs, including the B200, and NP-series instances. The Foundry marketplace introduces a real-time compute market and multi-agent orchestration. Responsible AI tools help developers evaluate fairness and interpretability.

Experts Highlight

- Deep integration: Azure aligns closely with Microsoft productivity tools and offers robust identity and security.

- Partner ecosystem: Collaboration with OpenAI and Databricks enhances its capabilities.

- Innovation in Foundry: Real-time compute markets and multi-agent orchestration show Azure’s move beyond traditional cloud resources.

IBM Watsonx and Oracle Cloud Infrastructure

IBM Watsonx offers capabilities for building, governing, and deploying AI across hybrid clouds. It provides a model library, data storage, and governance layer to manage the lifecycle and compliance. Oracle Cloud Infrastructure delivers AI-enabled databases, high-performance computing, and transparent pricing.

Expert Opinions

- Hybrid focus: IBM is strong in hybrid and on-prem solutions—suitable for regulated industries.

- Governance: Watsonx emphasizes governance and responsible AI, appealing to compliance-driven sectors.

- Integrated data: OCI ties AI services directly to its autonomous database, reducing latency and data movement.

What About Regional Cloud and Edge Providers?

Alibaba Cloud and Tencent Cloud offer in-house AI chips, such as Alibaba’s Hanguang, tailored to local rules and languages in Asia-Pacific. Edge providers like Akamai and Fastly enable low-latency inference at network edges, essential for IoT and real-time analytics.

Which Companies Lead in Hardware and Chip Innovation?

How Does NVIDIA Maintain Its Performance Leadership?

NVIDIA leads the market with its Hopper-generation H100 and its Blackwell-generation GPUs such as the B100. These chips power many generative AI models and data centers. DGX systems bundle GPUs, networking, and software for optimized performance. Features such as tensor cores, NVLink, and fine-grained compute partitioning support high-throughput parallelism and better utilization.

Expert Advice

- Performance gains: The H100 significantly outperforms the previous generation, offering more performance per watt and higher memory bandwidth.

- Ecosystem strength: NVIDIA’s CUDA and cuDNN are foundations for many deep-learning frameworks.

- Plug-and-play clusters: DGX-SuperPODs allow enterprises to rapidly deploy supercomputing clusters.

What Are AMD and Intel Doing?

AMD competes with MI300X and MI400 GPUs, focusing on high-bandwidth memory and cost efficiency. Intel develops Gaudi accelerators through its Habana Labs unit while integrating AI features into Xeon processors.

Expert Insights

- Cost-effective performance: AMD’s GPUs often deliver excellent price-performance, especially for inference workloads.

- Gaudi’s unique design: Intel uses specialized interconnects to speed tensor operations.

- CPU-level AI: Integrating AI acceleration into CPUs benefits edge and mid-scale workloads.

Who Are the Specialized Chip Innovators?

- AWS Trainium/Inferentia lowers cost per FLOP and energy use for training and inference.

- Cerebras Systems produces the Wafer-Scale Engine (WSE), boasting 850,000 AI cores.

- Groq designs chips for ultra-low-latency inference, ideal for real-time applications like autonomous vehicles.

- Etched builds the Sohu ASIC for transformer inference, dramatically improving energy efficiency.

- Tenstorrent employs RISC-V cores and is building decentralized data centers.

- Photonic chip makers like Lightmatter use light to perform convolutions with very little energy.

Expert Perspectives

- Diversifying hardware: The rise of specialized chips signals a move toward task-specific hardware.

- Energy efficiency: Photonic and transformer-specific chips cut power consumption dramatically.

- Emerging vendors: Companies like Groq, Tenstorrent, and Lightmatter show that tech giants are not the only ones who can innovate.

Which Startups and Data Center Providers Are Shaping AI Infrastructure?

What Is CoreWeave’s Value Proposition?

CoreWeave evolved from cryptocurrency mining to become a prominent GPU cloud provider. It provides on-demand access to NVIDIA’s latest Blackwell and RTX PRO GPUs, coupled with high-performance InfiniBand networking. Pricing can be up to 80 % lower than traditional clouds, making it popular with startups and labs.

Expert Advice

- Scale advantage: CoreWeave manages hundreds of thousands of GPUs and is expanding data centers with $6 billion in funding.

- Transparent pricing: Customers can clearly see costs and reserve capacity for guaranteed availability.

- Enterprise partnerships: CoreWeave collaborates with AI labs to provide dedicated clusters for large models.

How Does Lambda Labs Stand Out?

Lambda Labs offers developer-friendly GPU clouds with 1-Click clusters and transparent pricing—A100 at $1.25/hr, H100 at $2.49/hr. It raised $480 million to build liquid-cooled data centers and earned SOC2 Type II certification.

Expert Advice

- Transparency: Clear pricing reduces surprise fees.

- Compliance: SOC2 and ISO certifications make Lambda appealing for regulated industries.

- Innovation: Liquid-cooled data centers enhance energy efficiency and density.

What Do Together AI, Voltage Park, and Tenstorrent Offer?

- Together AI is building an open-source cloud with pay-as-you-go compute.

- Voltage Park offers clusters of H100 GPUs at competitive prices.

- Tenstorrent integrates RISC-V cores and aims for decentralized data centers.

Expert Opinions

- Demand drivers: The shortage of GPUs and high cloud costs drive the rise of AI data center startups.

- Emerging names: Other players include Lightmatter, Iren, Rebellions.ai, and Rain AI.

- Open ecosystems: Together AI fosters collaboration by releasing models and tools publicly.

What About Data & MLOps Infrastructure: From DataOps 2.0 to Observability?

Why Is DataOps Critical for AI?

DataOps oversees data gathering, cleaning, transformation, labeling, and versioning. Without robust DataOps, models risk drift, bias, and reproducibility issues. In generative AI, managing millions of data points demands automated pipelines. Bessemer calls this DataOps 2.0, emphasizing that data pipelines must scale like the compute layer.

Why Is Observability Essential?

After deployment, models require continuous monitoring to catch performance degradation, bias, and security threats. Tools like Arize AI and WhyLabs track metrics and detect drift. Governance platforms like Credo AI and Aporia ensure compliance with fairness and privacy requirements. Observability grows critical as models interact with real-time data and adapt via reinforcement learning.
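As a rough illustration of what "detecting drift" can mean in practice, here is a minimal sketch of the Population Stability Index (PSI), a common drift statistic that compares a live feature distribution against a training-time baseline. It is not the method or API of any tool named above. The bin count, the 1e-6 floor, and the sample data are assumptions chosen for the example, and the often-cited rule of thumb that PSI above 0.25 signals a significant shift is a convention, not a guarantee.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in an edge bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor each share to avoid log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a uniform baseline and a live sample shifted upward.
baseline = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

print(f"no drift: {psi(baseline, baseline):.3f}")
print(f"shifted:  {psi(baseline, shifted):.3f}")
```

A production monitor would compute a statistic like this per feature on a schedule and alert when it crosses a threshold the team has agreed on.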

How Do Orchestration Frameworks Work?

LangChain, LlamaIndex, Modal, and Foundry allow developers to stitch together multiple models or services to build LLM agents, chatbots, and autonomous workflows. These frameworks manage state, context, and errors. Clarifai’s platform offers built-in workflows and compute orchestration for both local and cloud environments. With Clarifai’s Local Runners, you can train models where data resides and deploy inference on Clarifai’s managed platform for scalability and privacy.
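To make "managing state, context, and errors" concrete, the following is a deliberately tiny sketch of the pattern in plain Python. It does not use or imitate the API of LangChain, LlamaIndex, Modal, Foundry, or Clarifai; `run_pipeline`, `retrieve`, and `generate` are hypothetical names, and the "model call" is a stub that fails once to exercise the retry path.

```python
from typing import Callable

Step = Callable[[dict], dict]


def run_pipeline(steps: list[Step], context: dict, max_retries: int = 2) -> dict:
    """Run steps in order, passing shared context and retrying failures."""
    for step in steps:
        for attempt in range(max_retries + 1):
            try:
                # Each attempt works on a shallow copy, so a failed attempt
                # cannot leave top-level keys half-updated.
                context = step(dict(context))
                break
            except RuntimeError as err:
                context.setdefault("errors", []).append(f"{step.__name__}: {err}")
                if attempt == max_retries:
                    raise
    return context


calls = {"generate": 0}


def retrieve(ctx: dict) -> dict:
    ctx["documents"] = ["doc about GPUs"]  # stand-in for a vector search
    return ctx


def generate(ctx: dict) -> dict:
    calls["generate"] += 1
    if calls["generate"] == 1:  # simulate one transient failure
        raise RuntimeError("model endpoint timed out")
    ctx["answer"] = f"Answer based on {len(ctx['documents'])} document(s)"
    return ctx


result = run_pipeline([retrieve, generate], {"question": "Which GPU?"})
print(result["answer"])
print(result["errors"])
```

Real frameworks add much more on top of this skeleton, such as branching, tool calls, streaming, and persistence, but the core idea of threading context through steps with controlled error handling is the same.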

Expert Insights

- Production gap: Only 5–10 % of businesses have generative AI in production because DataOps and orchestration are too complex.

- Workflow automation: Orchestration frameworks are essential as AI moves from static endpoints to agent-based applications.

- Clarifai integration: Clarifai’s dataset management, annotations, and workflows make DataOps and MLOps accessible at scale.

What Criteria Matter When Comparing AI Infrastructure Providers?

How Important Are Compute Power and Scalability?

Having cutting-edge hardware is essential. Providers should offer the latest GPUs or specialized chips (H100, B200, Trainium) and support large clusters. Compare network bandwidth (InfiniBand vs. Ethernet) and memory bandwidth because transformer models are often memory-bound. Scalability depends on a provider’s ability to quickly expand capacity across regions.

Why Is Pricing Transparency Crucial?

Hidden expenses can derail projects. Many hyperscalers have complex pricing models based on compute hours, storage, and egress. AI-native clouds like CoreWeave and Lambda Labs stand out with simple pricing. Consider reserved capacity discounts, spot pricing, and serverless inference to minimize costs. Clarifai’s pay-as-you-go model auto-scales inference for cost optimization.
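One way to surface hidden expenses is to price the same job under each quote with every fee line spelled out. The sketch below uses the A100 and H100 hourly rates quoted earlier in this guide for Lambda Labs; the third quote, its egress and storage fees, and the job size (8 GPUs for 72 hours) are hypothetical values invented for illustration, not any provider's actual pricing.

```python
from dataclasses import dataclass


@dataclass
class Quote:
    name: str
    gpu_hourly_usd: float                  # price per GPU-hour
    egress_usd_per_gb: float = 0.0         # data-transfer-out fee
    storage_usd_per_gb_month: float = 0.0  # persistent storage fee


def job_cost(quote, gpus, hours, egress_gb=0.0, storage_gb=0.0, months=1.0):
    """Total cost in USD for one job, rounded to cents."""
    compute = quote.gpu_hourly_usd * gpus * hours
    egress = quote.egress_usd_per_gb * egress_gb
    storage = quote.storage_usd_per_gb_month * storage_gb * months
    return round(compute + egress + storage, 2)


# GPU-hour prices quoted earlier in this guide.
a100 = Quote("A100 on-demand", gpu_hourly_usd=1.25)
h100 = Quote("H100 on-demand", gpu_hourly_usd=2.49)
# Hypothetical quote: same GPU rate plus made-up egress and storage fees.
with_fees = Quote("H100 plus fees", 2.49,
                  egress_usd_per_gb=0.09, storage_usd_per_gb_month=0.02)

for q in (a100, h100, with_fees):
    total = job_cost(q, gpus=8, hours=72, egress_gb=500, storage_gb=2000)
    print(f"{q.name}: ${total:,.2f}")
```

Even with identical GPU rates, the extra fee lines change the total, which is why itemized quotes are worth requesting before committing.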

How Does Performance and Latency Affect Your Choice?

Performance varies across hardware generations, interconnects, and software stacks. MLPerf benchmarks offer standardized metrics. Latency matters for real-time applications (e.g., chatbots, self-driving cars). Specialized chips such as Groq’s processors and Etched’s Sohu are designed for very low inference latency. Evaluate how providers handle bursts and maintain consistent performance.

Why Focus on Sustainability and Energy Efficiency?

AI’s environmental impact is significant:

- Data centers used 460 TWh of electricity in 2022; projected to exceed 1,050 TWh by 2026.

- Training GPT-3 consumed 1,287 MWh and emitted 552 tons of CO₂.

- Photonic chips promise near-zero-energy convolution.

- Cooling accounts for considerable water use.

Choose providers committed to renewable energy, efficient cooling, and carbon offsets. Clarifai’s ability to orchestrate compute on local hardware reduces data transport and emissions.

How Does Security & Compliance Affect Decisions?

AI systems must protect sensitive data and follow regulations. Ask about SOC2 and ISO 27001 certifications and GDPR compliance. 55 % of businesses report increased cyber threats after adopting AI, and 46 % cite cybersecurity gaps. Look for providers with encryption, granular access controls, audit logging, and zero-trust architectures. Clarifai offers enterprise-grade security and on-prem deployment options.

What About Ecosystem & Integration?

Choose providers compatible with popular frameworks (PyTorch, TensorFlow, JAX), container tools (Docker, Kubernetes), and hybrid deployments. A broad partner ecosystem enhances integration. Clarifai’s API interoperates with external data sources and supports REST, gRPC, and edge runtimes.
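The criteria in this section can be combined into a simple weighted scoring matrix. The sketch below is one possible way to do that. The weights, the 1-to-5 scores, and the provider names are all hypothetical placeholders to be replaced with your own assessments.

```python
# Hypothetical weights for the criteria discussed above; they must sum to 1.
WEIGHTS = {
    "compute": 0.25,
    "pricing": 0.20,
    "latency": 0.15,
    "sustainability": 0.10,
    "security": 0.20,
    "ecosystem": 0.10,
}


def weighted_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Weighted sum of 1-5 criterion scores."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


# Made-up scores for two fictional providers.
candidates = {
    "provider_a": {"compute": 5, "pricing": 2, "latency": 4,
                   "sustainability": 3, "security": 5, "ecosystem": 5},
    "provider_b": {"compute": 4, "pricing": 5, "latency": 4,
                   "sustainability": 3, "security": 3, "ecosystem": 3},
}

ranking = sorted(
    ((name, weighted_score(s)) for name, s in candidates.items()),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

The value of the exercise lies less in the final number than in forcing the team to agree on weights before comparing vendors.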

Expert Insights

- Skills shortage: 61 % of firms lack specialists in computing; 53 % lack data scientists.

- Capital intensity: Building full-stack AI infrastructure costs billions—only well-funded companies can compete.

- Risk management: Investments should align with business goals and risk tolerance, as TrendForce advises.

What Is the Environmental Impact of AI Infrastructure?

How Big Are the Energy and Water Demands?

AI infrastructure consumes huge amounts of resources. Data centers used 460 TWh of electricity in 2022 and may surpass 1,050 TWh by 2026. Training GPT-3 used 1,287 MWh and emitted 552 tons of CO₂. A single generative AI query is estimated to consume about five times more electricity than a typical web search. Cooling also demands around 2 liters of water per kilowatt-hour.
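The figures above can be cross-checked with a few lines of arithmetic. The sketch below derives the carbon intensity implied by the GPT-3 numbers and, purely as an illustration, applies the 2 liters per kilowatt-hour cooling figure to the same training run. It assumes "tons" means metric tons and that the cooling figure applies uniformly, neither of which the figures themselves confirm.

```python
# Figures quoted in the paragraph above.
TRAINING_MWH = 1_287
TRAINING_TONS_CO2 = 552          # assumed to be metric tons
WATER_L_PER_KWH = 2
DC_TWH_2022, DC_TWH_2026 = 460, 1_050

training_kwh = TRAINING_MWH * 1_000

# Implied grid carbon intensity in grams of CO2 per kWh.
grams_per_kwh = TRAINING_TONS_CO2 * 1_000_000 / training_kwh

# Illustrative only: cooling water if 2 L/kWh applied to this run.
water_liters = training_kwh * WATER_L_PER_KWH

# Projected growth factor in data center electricity use.
growth_factor = DC_TWH_2026 / DC_TWH_2022

print(f"Implied carbon intensity: {grams_per_kwh:.0f} g CO2/kWh")
print(f"Illustrative cooling water: {water_liters:,} liters")
print(f"Data center demand, 2022 to 2026: x{growth_factor:.2f}")
```

The implied intensity of roughly 429 g CO₂ per kWh shows how strongly the footprint of a training run depends on the grid that powers it.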

How Are Data Centers Adapting?

Data centers adopt energy-efficient chips, liquid cooling, and renewable power. HPE’s fanless liquid-cooled design reduces electricity and noise. Photonic chips sharply reduce resistive losses and heat. Companies like Iren and Lightmatter build data centers tied to renewable energy. The ACEEE warns that AI data centers could use 9 % of U.S. electricity by 2030, advocating for energy-per-AI-task metrics and grid-aware scheduling.

What Sustainable Practices Can Businesses Adopt?

- Better scheduling: Run non-urgent training jobs during off-peak periods to utilize surplus renewable energy.

- Model efficiency: Apply techniques like state-space models and Mixture-of-Experts to reduce compute needs.

- Edge inference: Deploy models locally to reduce data center traffic and latency.

- Monitoring & reporting: Track per-model energy use and work with providers who disclose carbon footprints.

- Clarifai’s local runners: Train on-prem and scale inference via Clarifai’s orchestrator to cut data transfer.
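The scheduling practice in the list above can be sketched as a small search over a carbon-intensity forecast: given hourly values, pick the contiguous window with the lowest average for a deferrable job. The forecast values below are hypothetical, as is the function name; a real setup would pull the forecast from a grid operator or a carbon-data service.

```python
def greenest_window(forecast, job_hours):
    """Return (start_hour, mean_intensity) of the lowest-carbon window."""
    if not 0 < job_hours <= len(forecast):
        raise ValueError("job_hours must fit inside the forecast")
    best_start = 0
    best_sum = window = sum(forecast[:job_hours])
    # Slide the window one hour at a time, updating the running sum.
    for start in range(1, len(forecast) - job_hours + 1):
        window += forecast[start + job_hours - 1] - forecast[start - 1]
        if window < best_sum:
            best_start, best_sum = start, window
    return best_start, best_sum / job_hours


# Hypothetical 24-hour forecast in g CO2/kWh, with a midday solar dip.
forecast = [420, 410, 400, 390, 380, 370, 340, 300, 260, 220, 190, 170,
            160, 165, 180, 220, 280, 350, 410, 450, 460, 450, 440, 430]

start, mean = greenest_window(forecast, job_hours=4)
print(f"Start the 4-hour job at hour {start} (avg {mean} g CO2/kWh)")
```

With this made-up forecast the job would start at hour 11, when the four-hour average is lowest.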

Expert Opinions

- Future grids: The ACEEE recommends aligning workloads with renewable availability.

- Transparent metrics: Without clear metrics, companies risk overbuilding infrastructure.

- Continuous innovation: Photonic computing, RISC-V, and dynamic scheduling are critical for sustainable AI.

What Are the Challenges and Future Trends in AI Infrastructure?

Why Are Compute Scalability and Memory Bottlenecks Critical?

As Moore’s Law slows, scaling compute becomes difficult. Memory bandwidth now limits transformer training. Techniques like Ring Attention and KV-cache optimization ease memory pressure on long sequences. Mixture-of-Experts activates only a few experts per token, lowering compute per token. Future GPUs will feature larger caches and faster HBM.
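A quick estimate shows why the KV cache is a memory concern. For a standard transformer decoder, the cache stores one key and one value vector per layer, per key-value head, per token. The sketch below applies that formula to a hypothetical model; the layer count, head counts, head dimension, batch size, and context length are assumptions for illustration, not the specifications of any real model.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch, bytes_per_value=2):
    """Size of the key/value cache: 2 tensors (K and V) per layer."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value


GIB = 2 ** 30

# Hypothetical model: 32 layers, head_dim 128, fp16 values (2 bytes each),
# serving a batch of 8 sequences at 4,096 tokens of context.
full = kv_cache_bytes(layers=32, kv_heads=32, head_dim=128, seq_len=4096, batch=8)
# Same model with grouped-query attention sharing 8 key-value heads.
grouped = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=4096, batch=8)

print(f"32 KV heads: {full / GIB:.0f} GiB")
print(f" 8 KV heads: {grouped / GIB:.0f} GiB")
```

Because the cache grows linearly with both batch size and context length, reducing the number of key-value heads or the bytes per value directly frees accelerator memory for larger batches.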

What Drives Capital Intensity and Supply Chain Risks?

Building AI infrastructure is extremely capital-intensive. Only large tech firms and well-funded startups can build chip fabs and data centers. Geopolitical tensions and export restrictions create supply chain risks, delaying hardware and driving the need for diversified architecture and regional production.

Why Are Transparency and Explainability Important?

Stakeholders demand explainable AI, but many providers keep performance data proprietary. Openness is difficult to balance with competitive advantage. Vendors are increasingly providing white-box architectures, open benchmarks, and model cards.

How Are Specialized Hardware and Algorithms Evolving?

Emerging state-space models and transformer variants require different hardware. Startups like Etched and Groq build chips tailored for specific use cases. Photonic and quantum computing may become mainstream. Expect a diverse ecosystem with multiple specialized hardware types.

What’s the Impact of Agent-Based Models and Serverless Compute?

Agent-based architectures demand dynamic orchestration. Serverless GPU backends like Modal and Foundry allocate compute on demand, working with multi-agent frameworks to power chatbots and autonomous workflows. This approach democratizes AI development by removing server management.

Expert Opinions

- Goal-driven strategy: Align investments with clear business objectives and risk tolerance.

- Infrastructure scaling: Plan for future architectures despite uncertain chip roadmaps.

- Geopolitical awareness: Diversify suppliers and develop contingency plans to handle supply chain disruptions.

How Should Governance, Ethics, and Compliance Be Addressed?

What Does the Governance Layer Involve?

Governance covers security, privacy, ethics, and regulatory compliance. AI providers must implement encryption, access controls, and audit trails. Standards and regulations such as SOC2, ISO 27001, FedRAMP, and the EU AI Act define what compliance requires. Governance also demands ethical considerations: avoiding bias, ensuring transparency, and respecting user rights.

How Do You Manage Compliance and Risk?

Perform risk assessments considering data residency, cross-border transfers, and contractual obligations. 55 % of businesses experience increased cyber threats after adopting AI. Clarifai helps with compliance through granular roles, permissions, and on-premise options, enabling safe deployment while reducing legal risks.

Expert Opinions

- Transparency challenge: Stakeholders demand greater transparency and clarity.

- Fairness and bias: Evaluate fairness and bias within the model lifecycle, using tools like Clarifai’s Data Labeler.

- Regulatory horizon: Stay updated on emerging laws (e.g., EU AI Act, US Executive Orders) and adapt infrastructure accordingly.

Final Thoughts and Suggestions

AI infrastructure is evolving rapidly as demand and technology progress. The market is shifting from generic cloud platforms to specialized providers, custom chips, and agent-based orchestration. Environmental concerns are pushing companies toward energy-efficient designs and renewable integration. When evaluating vendors, organizations must look beyond performance to consider cost transparency, security, governance, and environmental impact.

Actionable Recommendations

- Choose hardware and cloud services tailored to your workload (training, inference, deployment). Use dedicated chips (like Inferentia or Sohu) for high-volume inference; reserve GPUs for large training jobs.

- Plan capacity ahead: The demand for GPUs often exceeds supply. Reserve resources or partner with providers who can guarantee availability.

- Optimize sustainability: Use model-efficient techniques, schedule jobs during renewable peaks, and choose providers with transparent carbon reporting.

- Prioritize governance: Ensure providers meet compliance standards and offer robust security. Include fairness and bias monitoring from the start.

- Leverage Clarifai: Clarifai’s platform manages datasets, annotations, model deployment, and orchestration. Local runners allow on-prem training and seamless scaling to the cloud, balancing performance, cost, and data sovereignty.

FAQs

Q1: How do AI infrastructure and IT infrastructure differ?

A: AI infrastructure uses specialized accelerators, DataOps pipelines, observability tools, and orchestration frameworks for training and deploying ML models, whereas traditional IT infrastructure handles generic compute, storage, and networking.

Q2: Which cloud service is best for AI workloads?

A: It depends on the needs. AWS offers the most custom chips and managed services; Google Cloud excels with high-performance TPUs; Azure integrates seamlessly with business tools. For GPU-heavy workloads, specialized clouds like CoreWeave and Lambda Labs may provide better value. Compare compute options, pricing transparency, and ecosystem support.

Q3: How can I make my AI deployment more sustainable?

A: Use energy-efficient hardware, schedule jobs during periods of low demand, employ Mixture-of-Experts or state-space models, partner with providers investing in renewable energy, and report carbon metrics. Running inference at the edge or using Clarifai’s local runners reduces data center usage.

Q4: What should I look for in start-up AI clouds?

A: Seek transparent pricing, access to the latest GPUs, compliance certifications, and reliable customer support. Understand their approach to demand spikes, whether they offer reserved instances, and evaluate their financial stability and growth plans.

Q5: How does Clarifai integrate with AI infrastructure?

A: Clarifai provides a unified platform for dataset management, annotation, model training, and inference deployment. Its compute orchestrator connects to multiple cloud providers or on-prem servers, while local runners enable training and inference in controlled environments, balancing speed, cost, and compliance.