A business that wants to grow its data analytics capabilities has two options: developing an in-house data analytics team or using managed analytics services. Many companies prefer the traditional first option, believing that growing an in-house team is the safe choice. This strategy can certainly be powerful, but it comes with certain challenges. Drawing on my practical experience, I’ve outlined a typical profile of a company growing its in-house analytics team and picked out 3 challenges such companies are likely to face in the process.

A typical profile of a company about to grow its in-house analytics team

Meet Prod&Sell, a large manufacturer and retailer currently present in 15 states. Although the company has a data analytics team, the team lags behind Prod&Sell’s evolving analytics needs. While Prod&Sell’s C-suite and business units expect reliable and timely reporting, self-service and predictive analytics (to name a few things), the analytics team still collects data from disparate sources, cleans it and prepares reports manually.

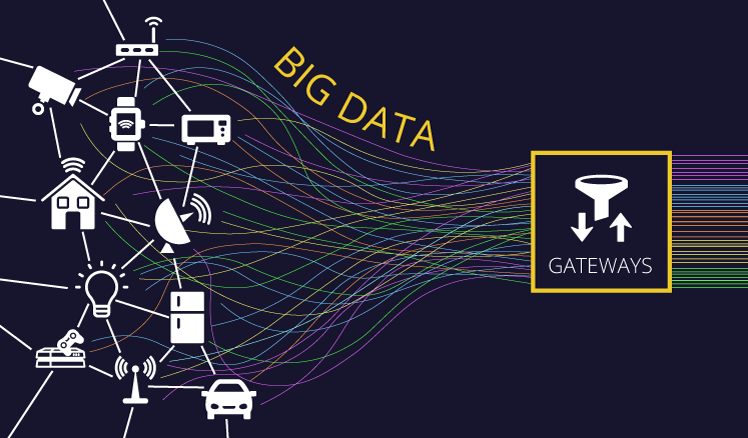

To satisfy the company’s analytical needs, the C-suite opts for creating a business intelligence solution with embedded big data analytics and data science capabilities. Colin, the Head of Prod&Sell’s data analytics, is tasked with developing his department further so that it can implement this ambitious project.

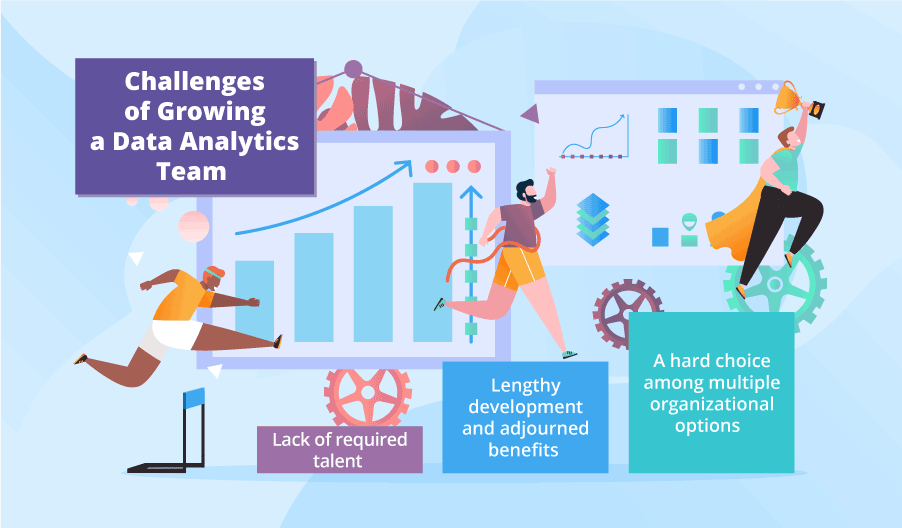

Challenges of developing an in-house data analytics team

Here are the challenges that lie ahead for Prod&Sell and Colin:

Lack of required talent

First, Colin has to build a team with expertise in several domains:

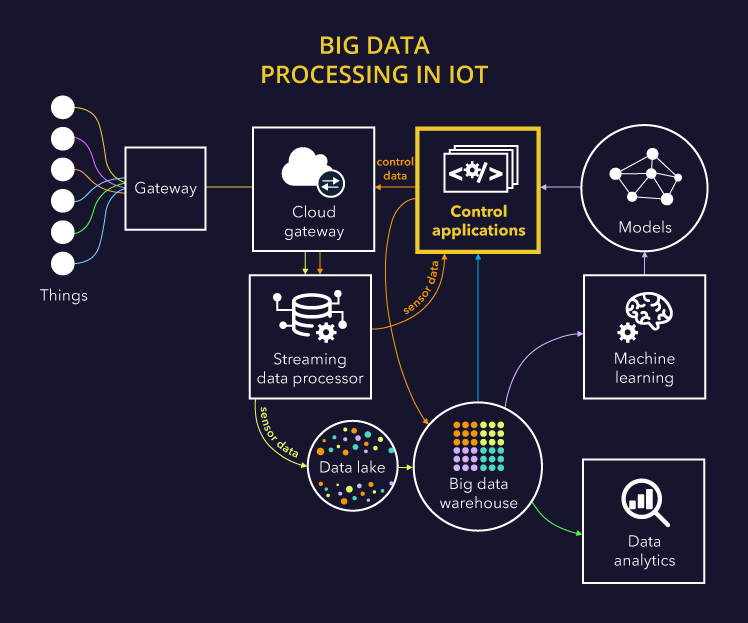

- BI and big data: to design and implement a data lake, a data warehouse, OLAP cubes, reports and dashboards, as well as administer the implemented solution on a daily basis.

- Data science: to design machine learning models and tune their hyperparameters, train and retrain them, and deal with noise reduction.

- Data quality and data security: to set up and automate a data quality management process and ensure role-based access to data.

- Business analysis: to elicit the needs of different business units and departments.

Given that big data and data science skills are in short supply, it will take Colin many months to fill all the required roles and close the talent gaps.

Lengthy development and delayed benefits

It may take Prod&Sell about 2 years to develop the solution with all the analytics capabilities they want. This implies a long transitional period during which the data analytics team will have to split their efforts between maintaining the existing practices and developing new ones. Even if Colin’s team chooses wisely among the available software development life cycle models, they will only be able to shorten the transitional period, not eliminate it. Routine tasks will therefore keep slowing progress toward the strategic goals, which can make the effort invested in developing the data analytics team seem futile. As a result, the C-suite may eventually decide to abandon the idea of further growth.

A hard choice among multiple organizational options

Prod&Sell will have to decide whether their analytics will be centralized or decentralized and, correspondingly, where the restructured analytics department will sit in the organizational structure (which unit it should report to: finance, marketing and sales, or each strategic business unit). A strategic mistake at this stage can force the company to restructure and refocus the analytics department again later.

But overall, things aren’t that bad

Though the situation this article describes is rather gloomy, I’m by no means trying to dissuade you from developing an in-house analytics team. My message is ‘Be prepared for challenges and work out ways to overcome them before they happen’. For example, you can invite consultants to cover talent gaps and transfer knowledge, or outsource a part of your analytics, which promises to relieve most of these problems.
