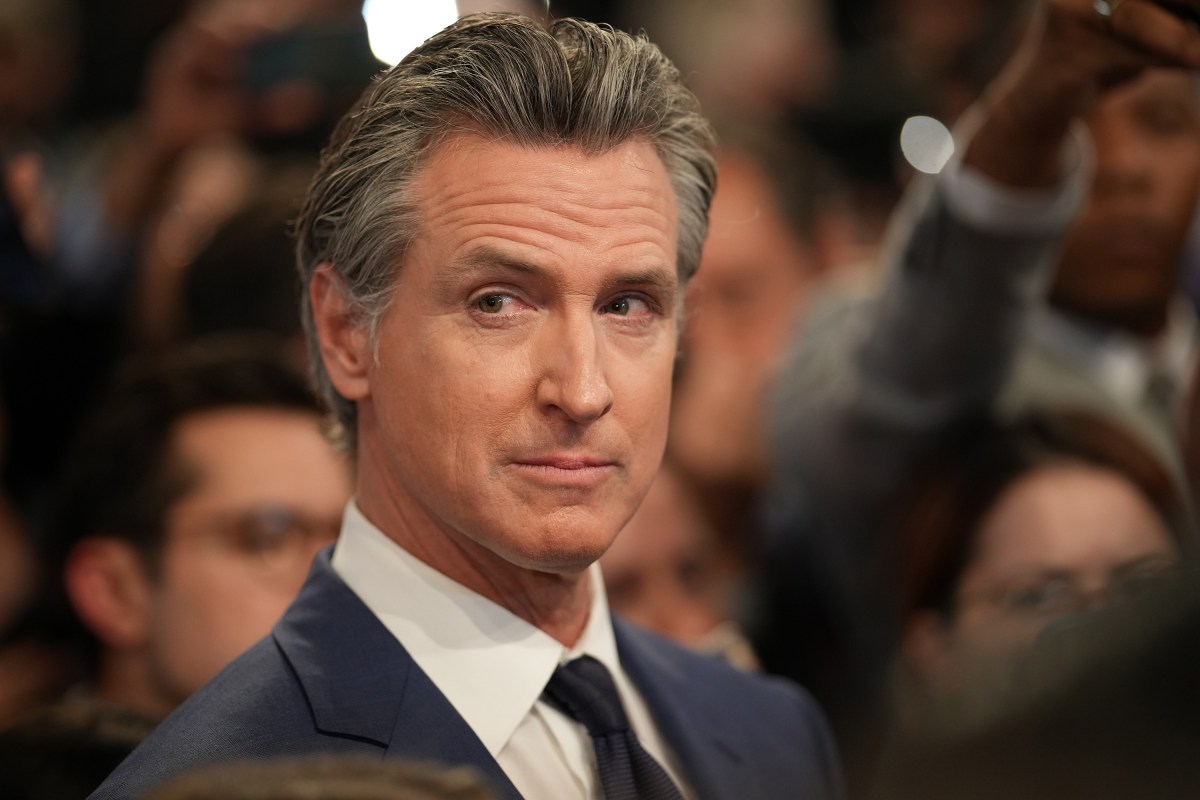

California Governor Gavin Newsom is currently considering 38 AI-related bills, including the highly contentious SB 1047, which the state’s legislature sent to his desk for final approval. These bills try to address the most pressing issues in artificial intelligence, ranging from futuristic AI systems that create existential risk, to deepfake nudes from AI image generators, to Hollywood studios creating AI clones of dead performers.

“Home to the majority of the world’s leading AI companies, California is working to harness these transformative technologies to help address pressing challenges while studying the risks they present,” said Governor Newsom’s office in a press release.

So far, Governor Newsom has signed eight of the bills into law, some of which are America’s most far-reaching AI laws yet.

Deepfake nudes

On Thursday, Newsom signed two laws that address the creation and spread of deepfake nudes. SB 926 criminalizes blackmailing someone with AI-generated nude images that resemble them.

SB 981, which also became law on Thursday, requires social media platforms to establish channels for users to report deepfake nudes that resemble them. The content must then be temporarily blocked while the platform investigates it, and permanently removed if confirmed.

Watermarks

Also on Thursday, Newsom signed a bill into law to help the public identify AI-generated content. SB 942 requires widely used generative AI systems to disclose in their content’s provenance data that the content is AI-generated. For example, all images created by OpenAI’s DALL-E now need a small tag in their metadata saying they’re AI-generated.

Many AI companies already do this, and there are several free tools out there that can help people read this provenance data and detect AI-generated content.

Election deepfakes

Earlier this week, California’s governor signed three laws cracking down on AI deepfakes that could influence elections.

One of California’s new laws, AB 2655, requires large online platforms, like Facebook and X, to remove or label AI deepfakes related to elections, as well as create channels to report such content. Candidates and elected officials can seek injunctive relief if a large online platform is not complying with the act.

Another law, AB 2839, takes aim at social media users who post, or repost, AI deepfakes that could deceive voters about upcoming elections. The law went into effect immediately on Tuesday, and Newsom suggested Elon Musk may be at risk of violating it.

AI-generated political advertisements now require outright disclosures under California’s new law, AB 2355. That means moving forward, Trump may not be able to get away with posting AI deepfakes of Taylor Swift endorsing him on Truth Social (she endorsed Kamala Harris). The FCC has proposed a similar disclosure requirement at a national level and has already made robocalls using AI-generated voices illegal.

Actors and AI

Two laws that Newsom signed on Tuesday — which SAG-AFTRA, the nation’s largest film and broadcast actors union, was pushing for — create new standards for California’s media industry. AB 2602 requires studios to obtain permission from an actor before creating an AI-generated replica of their voice or likeness.

Meanwhile, AB 1836 prohibits studios from creating digital replicas of deceased performers without consent from their estates. Legally cleared replicas of this kind were used in the recent “Alien” and “Star Wars” movies, as well as in other films.

What’s left?

Governor Newsom still has 30 AI-related bills to decide on before the end of September. During a chat with Salesforce CEO Marc Benioff on Tuesday at the 2024 Dreamforce conference, Newsom may have tipped his hand about SB 1047, and how he’s thinking about regulating the AI industry more broadly.

“There’s one bill that is sort of outsized in terms of public discourse and consciousness; it’s this SB 1047,” said Newsom onstage Tuesday. “What are the demonstrable risks in AI and what are the hypothetical risks? I can’t solve for everything. What can we solve for? And so that’s the approach we’re taking across the spectrum on this.”

Check back on this article for updates on what AI laws California’s governor signs, and what he doesn’t.