Every year, there are nearly 30,000 new products introduced to the market, with a staggering 95% rate of failure. A big portion of those products is made by startups and small product design companies, but even internationally recognized names aren’t always immune to a new product development (NPD) fiasco. Remember the Google Glass project, which received millions of dollars in investment but quickly vanished from the conversation? Perhaps the backlash against New Coke in the mid-1980s is still fresh in memory, too. Even the multinational oral hygiene powerhouse, Colgate, had to taste the bitter experience of a bust with its Kitchen Entrees line.

Big companies can bounce back from an NPD debacle, but many of their less fortunate counterparts struggle to even afford the chance to try again. Failed products don’t just vanish; they leave behind companies whose brands and reputations are indefinitely tarnished. Not only does a product failure drag down the financial report, but it also costs the company momentum and likely the rare opportunity to establish a market position.

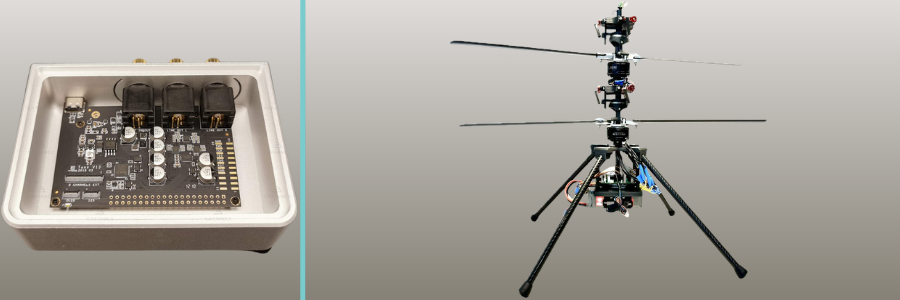

This is why concept testing is a crucial phase in an NPD process. At the end of the concept generation step, you probably end up with a dozen or more concept designs. Because it makes little financial sense to try to develop every single one of them all the way to the prototyping stage, you have to pick only one concept that actually warrants the resource allocations for further development. While choosing between competing concept designs isn’t always an exact science, there’s definitely something you can do to minimize your chances of becoming part of the harrowing statistics.

Concept testing consists of a series of purposeful steps to help you gather the product’s marketability data from end-users. In general, the data should tell what the target demographics like and dislike about the product, how it compares with competitors, why some consumers want the product while others avoid it, and whether the product leaves obvious room for improvement. As simple as it may sound, there’s no guarantee that the data you gather at the end of the testing will point to any particular concept. The data still has to be scrutinized and interpreted for it to be useful.

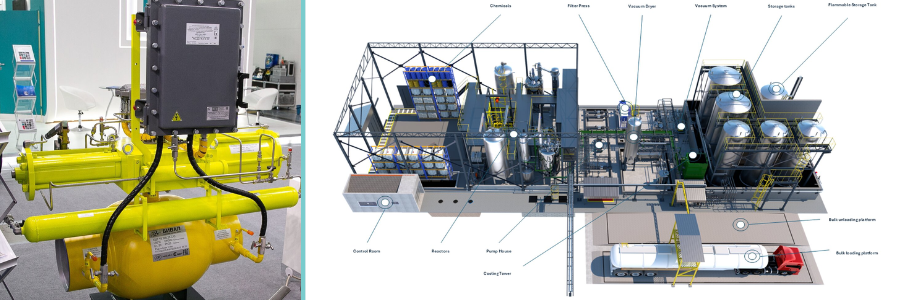

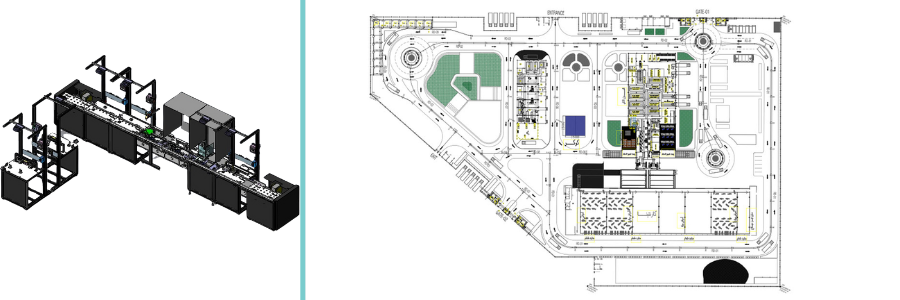

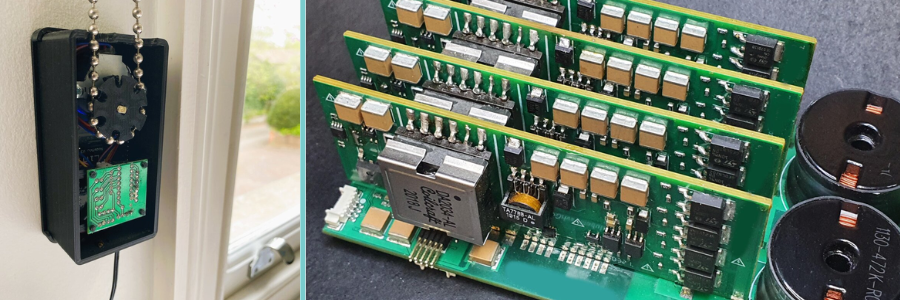

Given the complexities of formulating the test procedures, deciding which methodology to use, and determining which participants should take part in the testing, it’s advisable to have the process done or at least assisted by NPD professionals. Cad Crowd is among the few freelancing platforms that specialize in hardware product design and engineering design services, where you can connect and collaborate with strictly vetted, tried-and-true, seasoned industrial designers experienced in concept generation and testing. With client-friendly hiring options and robust IP protection services backed by more than 15 years of experience, Cad Crowd is a reliable one-stop shop used by companies big and small to outsource any and all stages of hardware product development. The platform itself can function as a project manager if you want, bridging communication and providing quality control to make sure that your concept testing process is handled only by the best-qualified talents to guarantee accurate results.

Concept testing vs. product testing

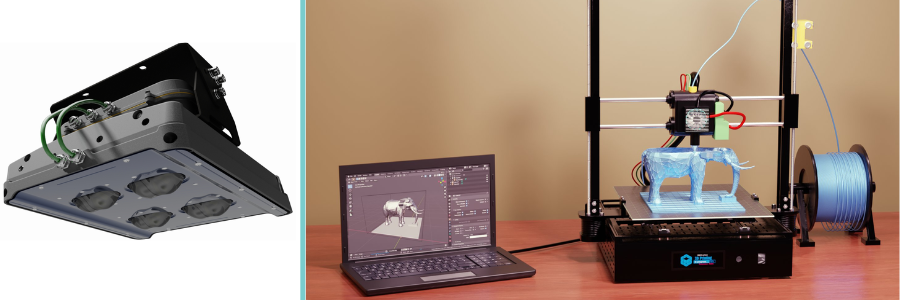

The primary purpose of concept testing is to evaluate the market viability of product designs while they are still in the conceptual stage. You don’t have a product yet at this point, as it has not been fully developed. The evaluation is meant to validate ideas early on in the NPD process when there’s still enough time to revise, improve, add, and discard most of the concepts being tested. As the evaluation concludes, you should end up with the most feasible concept, allowing you to allocate resources to further develop it. Concept testing must involve representatives of the target demographic (and in some cases, experts) giving their opinions on such subjects as potential for demand, perceived values, likely pain points, performance expectations, and so forth.

On the other hand, product testing implies that you already have an almost-finished product that has undergone some rounds of prototyping followed by small-volume manufacturing. The product is approaching its full market launch timeline, but you want to make sure that everything works as intended before it hits store shelves. Since the number of units is relatively small (from the pilot production), product testing is likely done by a small number of respondents, such as certification issuing organizations, a third-party panel of experts, focus groups, and beta testers.

It’s worth mentioning that concept testing isn’t a form of marketing campaign for your consumer product design firm, either. You’re not sending out the concepts to get people to invest money in the NPD project or to persuade them to make a purchase once the product is ready.

Choosing the one right concept design

Say you’re developing a new hardware product. The concept generation phase gives you about a dozen or so potential designs, each with its own strengths and weaknesses. Based on technical feasibility, development cost, time-to-market schedule, and certification requirements, you narrow the selections down to half a dozen options. A possible issue with a patented design comes up, forcing you to remove another concept from the list. You have five remaining concepts available, and all of them seem to be promising enough. But you only have the resources to fully develop one product. So, how can you be sure that you’ll pick the right one? The answer is concept testing by survey, and here’s how to do it properly.

Define clear objectives

Just like at the beginning of market research, always start by defining exactly what you want to learn from the testing. Avoid vague objectives such as evaluating multiple concepts or gathering feedback from potential consumers, as they can lead to poorly executed research at best and inconclusive results at worst. You want the respondents to give specific answers about the concepts, so it’s only appropriate to ask specific questions as well. For example:

- What do you think is good and bad about the concept?

- How does the concept compare to other products you’ve already used before?

- What features do you like the most?

- Which design element is the worst in your opinion?

- Is there any specific thing that makes you want this concept?

- What are the main reasons that you wouldn’t use this concept?

- On a scale of 1–10, how pleased are you with the concept?

- What kind of improvements do you expect to see?

- What features do you use the most?

- Does the product feel ergonomic enough?

Let the things you want to know about the concepts (from the respondents) guide you through every decision, from formulating the questions to selecting the proper methodology. When you focus on specific questions, it increases your chances of acquiring coherent, decipherable answers rather than scattered pieces of responses to sort through. Narrow-focused answers make it easier for concept design experts to run the results analysis later, too.

Involve the right participants

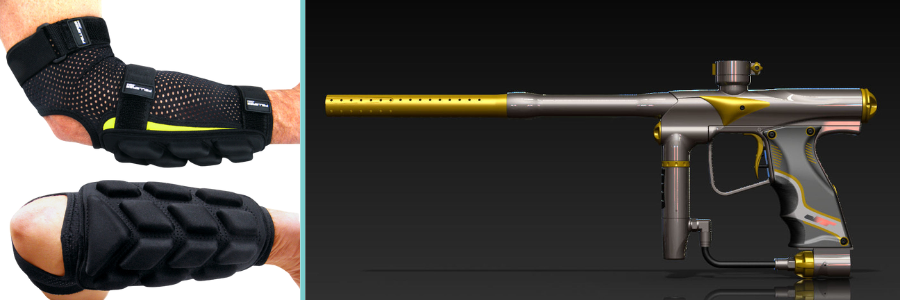

Whereas product testing serves as a requirement for regulatory compliance and a real-world performance simulation in the form of final quality control, concept testing is all about asking the respondents for their opinions about a hypothetical new product. The keyword here is “hypothetical” because the product has yet to materialize. All you have at this point are some concept designs, and you need feedback from potential end-users.

In concept testing, respondents should primarily consist of consumers from the target market; you may also include expert users, even if they don’t belong to the same demographic. If you’ve launched a hardware product before and the new version is meant to expand your market, keep in mind that the current customers may react differently from the prospects when they’re exposed to the same concepts. Among the biggest causes of failure in concept testing are randomly chosen participants, for example, people who may never realistically buy or use the product. Their answers only dilute the insights gained from the real target market, further complicating an already complex process.

It’s advisable to recruit 150–200 respondents from each segment of the target demographic. You need to strike the right balance between speed and statistical strength, aiming to discover actionable insights and build decision-making confidence (concept selection) without dragging testing out longer than necessary.
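
To get a feel for what that range buys in statistical terms, here is a minimal sketch that estimates the margin of error for a survey percentage at different sample sizes. It assumes simple random sampling, a 95% confidence level (z = 1.96), and the worst-case proportion of 50%; these assumptions and the function name `margin_of_error` are illustrative and not part of any prescribed procedure.

```python
import math


def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of the normal-approximation confidence interval for a proportion.

    n: respondents in one segment
    p: assumed share giving a particular answer (0.5 is the worst case)
    z: z-score for the confidence level (1.96 is roughly 95%)
    """
    return z * math.sqrt(p * (1 - p) / n)


for n in (150, 200):
    print(f"n={n}: +/-{margin_of_error(n) * 100:.1f} percentage points")
```

Under these assumptions, a segment of 150 respondents gives roughly plus or minus 8 percentage points, and 200 respondents gives roughly plus or minus 7. Going well beyond that range narrows the interval slowly, which is one way to think about the trade-off between speed and statistical strength.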

Testing methodology

There are four major methods commonly used for concept testing. It’s not uncommon to use a combination of two or more methods to gain as objective and reliable an insight as possible for product development experts.

Monadic: Each participant is presented with a single concept design to elicit an in-depth opinion, reducing the risk of comparison bias. Given the nature of the method, the data collected at the end of the process likely reflects respondents’ immediate reactions to a concept rather than their relative preferences. It won’t tell you why they chose any particular concept over another. That being said, a monadic survey is an excellent option for any of the following purposes:

- Evaluation of an innovation with no direct comparison benchmark.

- A review of a concept that requires a detailed demonstration.

- Feedback generation on every aspect of a concept design.

In some cases, the monadic method is chosen for the simple fact that comparison bias is irrelevant to the survey result. For instance, the concept is to be developed as a direct competitor of an existing product (there will be comparison bias, but you don’t want it to affect your decision). You already know that the concept shares more than enough similarities with the alternatives, and the survey is solely intended to gauge whether the concept receives favorable feedback. Obviously, a monadic survey isn’t an ideal method to help you choose from multiple concepts, unless you have two or more concepts being tested by different groups of respondents separately.

Sequential monadic: The same group of respondents evaluates multiple concepts, one at a time. Sequential monadic gives you the in-depth concept evaluation of its monadic counterpart, plus the ability to pit multiple concepts against each other. To control order bias, you should divide the respondents into several subgroups, with each subgroup evaluating the concepts in a different sequence. Among the best use cases of the method:

- Evaluation of 2 to 4 concepts, and you need an in-depth report of each.

- The feedback must include preference ranking.

- Statistical comparison among the concepts is required.

- The order of sequence in which you present the concepts may affect the objectivity or validity of the feedback.

Sequential monadic gives you a reasonable balance between detailed feedback and comparative preference in one go, making it an ideal method for budget-conscious concept design service and testing. While comparison bias is almost a given, the fact that a respondent can observe only one concept at a time can keep it to a reasonable minimum.
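
The subgroup rotation used for order bias control can be sketched in a few lines. The example below builds a simple cyclic rotation (a Latin square) so that every concept appears in every position equally often, then assigns respondents to the rotated orders in round-robin fashion. The concept labels, respondent IDs, and function names are hypothetical, and a cyclic rotation is only one of several possible balancing schemes.

```python
from collections import Counter


def rotated_orders(concepts):
    """Cyclic Latin square: each concept appears exactly once in every position."""
    n = len(concepts)
    return [[concepts[(start + i) % n] for i in range(n)] for start in range(n)]


def assign_orders(respondent_ids, concepts):
    """Give each respondent one of the rotated orders, cycling through them evenly."""
    orders = rotated_orders(concepts)
    return {rid: orders[i % len(orders)] for i, rid in enumerate(respondent_ids)}


concepts = ["A", "B", "C"]  # hypothetical concept labels
plan = assign_orders([f"r{i}" for i in range(6)], concepts)

# Count how often each concept is shown first across the whole panel.
first_shown = Counter(order[0] for order in plan.values())
print(first_shown)
```

With six respondents and three concepts, each concept is shown first to exactly two people. Note that this scheme balances position only; it does not balance which concept immediately precedes which, so a fully randomized order per respondent may be preferable when carry-over effects are a concern.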

Comparative: Unlike with monadic and sequential monadic, where comparison bias might skew the results, you actually count on comparison bias when using the aptly called “comparative” testing method. If the goal is to put multiple concepts to the test and choose the most favorable one, this is probably the most straightforward way to do it. By allowing the respondents to do a direct comparison between competing concept designs, the data should be as unambiguous as they come. Best use cases of the comparative method:

- A survey to figure out the key differentiators between multiple concept designs (from customers’ viewpoints).

- Selecting the most customer-preferred design.

- Research into whether end-users pay attention to subtle differences in multiple concepts.

The comparative method makes sense because this is what customers typically do before making a purchase. They put competing products side-by-side to understand the similarities and differences in the hope of making a well-informed buying decision. Comparative testing is how you gather preference-ranking data and identify which specific design elements most influence buyers’ choices.

Of course, the survey should ask for more than a simple ranking system. Respondents should be given the option to explain why they favor one concept over the others, providing insights to inform refinements.
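
As a rough illustration of how preference-ranking data from a comparative survey might be summarized, the sketch below computes each concept’s mean rank and first-choice share. The response data is made up for the example, and the function name `summarize_rankings` is hypothetical; a real analysis would also look at the open-ended explanations, which this summary ignores.

```python
from collections import defaultdict


def summarize_rankings(rankings):
    """Summarize rankings, each given as a list ordered from most to least preferred."""
    positions = defaultdict(list)
    for ranking in rankings:
        for position, concept in enumerate(ranking, start=1):
            positions[concept].append(position)
    total = len(rankings)
    return {
        concept: {
            "mean_rank": sum(ranks) / len(ranks),          # lower is better
            "first_choice_share": ranks.count(1) / total,  # share ranking it first
        }
        for concept, ranks in positions.items()
    }


# Made-up responses for illustration only.
responses = [
    ["B", "A", "C"],
    ["B", "C", "A"],
    ["A", "B", "C"],
    ["B", "A", "C"],
]
summary = summarize_rankings(responses)
for concept in sorted(summary, key=lambda c: summary[c]["mean_rank"]):
    print(concept, summary[concept])
```

In this toy data set, concept B has the best mean rank (1.25) and is the first choice of three out of four respondents.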

Protomonadic: A combination of monadic and comparative methods, protomonadic requires the respondents to evaluate the concepts in two phases. First, they evaluate the concepts individually and offer a detailed observation for each. In the second phase, they put the concepts side by side for direct comparison. Protomonadic is best used by design engineering experts for:

- Concept testing that involves complex designs, where thorough observation is required before comparison.

- New product development research (to support an investment decision).

- An in-depth look into how certain design elements affect relative preference.

Among the aforementioned methods, protomonadic is expected to provide the most comprehensive overview of a concept’s potential marketability. The test data should indicate whether respondents’ evaluations of individual concepts align with their comparative preferences. For example, “Concept A” receives high praise for its assortment of features, but the majority of respondents say that they’re more likely to purchase “Concept B” because it’s more user-friendly. This might signal that you need to make some design compromises for the final product.
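
The alignment check described above can be sketched as a comparison between the two phases: which concept earns the highest average rating on its own, and which one respondents actually pick when the concepts sit side by side. The ratings, choices, and the function name `compare_phases` are invented for illustration, and the sketch assumes there are no ties.

```python
from statistics import mean


def compare_phases(ratings, choices):
    """Compare individual ratings (phase 1) with head-to-head choices (phase 2).

    ratings: dict mapping concept -> list of individual scores
    choices: list with the concept each respondent picked in the comparison
    """
    top_rated = max(sorted(ratings), key=lambda c: mean(ratings[c]))
    top_chosen = max(sorted(set(choices)), key=choices.count)
    return {
        "top_rated": top_rated,
        "top_chosen": top_chosen,
        "aligned": top_rated == top_chosen,
    }


# Made-up data: A is rated higher in isolation, but B wins the direct comparison.
ratings = {"A": [9, 8, 9, 7], "B": [7, 8, 7, 8]}
choices = ["B", "B", "A", "B"]
result = compare_phases(ratings, choices)
print(result)
```

When `aligned` comes back false, as it does here, that is the cue to dig into the open-ended feedback and find out which design elements drive the gap between admiration and purchase intent.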

Note: there’s no single best method for every concept design test. If you have to choose between multiple concepts quickly, sequential monadic can be the ideal option. To gain a better understanding of how buyers respond to innovation, the monadic method promises a detailed evaluation. When in-depth comparison data is necessary, protomonadic is a wise choice. Choose the testing methodology according to the objectives, and always consider such factors as the complexity of concept design and budget.

Result analysis

Once the testing concludes, analyze the data and look for such findings as:

- Trends and patterns in concept selection among respondents

- How the demographic variations (age range, occupation, ethnicity, cultural backgrounds, etc.) affect relative preference

- Design elements with positive and negative feedback

- Surprises, or any unexpected responses

Based on the analysis, it should become more apparent how potential buyers perceive the value proposition of each concept, what features generate the highest purchase intent, and the biggest causes of concern that might hinder adoption. Everything comes down to the simple purpose of enabling data-driven concept selection by product engineering services. The testing helps you take out all the guesswork as you choose the most promising concept design for a product.
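
One of the findings listed above, how demographic variations affect relative preference, lends itself to a simple cross-tabulation. The sketch below groups responses by segment and reports each concept’s share within that segment. The age brackets, the responses, and the function name `preference_by_segment` are hypothetical placeholders.

```python
from collections import Counter, defaultdict


def preference_by_segment(responses):
    """Return each concept's share of preferences within every segment.

    responses: iterable of (segment, preferred_concept) pairs
    """
    counts = defaultdict(Counter)
    for segment, concept in responses:
        counts[segment][concept] += 1
    return {
        segment: {c: n / sum(tally.values()) for c, n in tally.items()}
        for segment, tally in counts.items()
    }


# Made-up responses for illustration only.
responses = [
    ("18-34", "A"), ("18-34", "A"), ("18-34", "B"),
    ("35-54", "B"), ("35-54", "B"), ("35-54", "A"), ("35-54", "B"),
]
shares = preference_by_segment(responses)
for segment, concept_shares in shares.items():
    print(segment, concept_shares)
```

In this toy data, the younger segment leans toward concept A while the older one leans toward B. With real samples, differences like these should be checked against the margin of error before they influence the final selection.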

Why concept testing matters

The idea behind concept testing is to better understand how your target market responds to a new design that could address a long-standing unmet need or offer a better alternative to existing products. You need validation (from potential buyers) that one of the proposed concept designs will perform well in the market when it’s finally launched. This validation plays no small part in your attempt to:

- Save time and resources: when a concept gains positive feedback from the target market, you have the much-needed confirmation that further development is indeed worth pursuing. It’s best to validate the marketability of a concept as early as possible in an NPD project, so that you can focus on refining ideas that will actually work instead of churning out more design sketches with little feasibility, if any.

- Minimize risk of failure: no one wants to develop a product that hardly sells. Respondents’ answers and observations are highly valuable for determining the next step in the development process. Whether you decide to add more features or abandon any particular design element, you should be able to trace it to the concept testing result analysis. You might not be able to provide everything that the customers want, but you can certainly avoid giving them the features they dislike.

- Secure stakeholders’ investments: when presenting a new product concept to stakeholders (including investors), you need to back your claims of profitability with verifiable data. Concept design testing in which the respondents are representatives of the target market can make a strong case to encourage buy-in.

Furthermore, concept testing is a good measure to ensure product-market fit. While the main purpose of concept testing is indeed to select the most marketable design among many, the respondents’ answers also may reveal their preferences, needs, and pain points. Bear in mind that if the testing involves only your own concepts (without competitors’ products), the design that receives the strongest positive feedback isn’t necessarily a guarantee of market fit. It only means that the design is the best-reviewed of the bunch. But an insight into customers’ expectations helps you form the basis of a broader new product design service, which might include product positioning, marketing campaign, prioritization of affordability over versatility or portability, etc.

The optimal and the adequate

It’s only natural that you want a clear-cut answer to everything, including matters of product design. In an ideal, simple world, selecting a concept is just a case of either/or; a concept is either good or bad, right or wrong, high-end or low-end, advanced or basic, and so forth. Everybody yearns for such simple, contrasting explanations because there’s a definitive line to separate one category from the other, leaving no room for confusion. Your target buyers also want the same thing, and so do your product designers. But the reality is that choosing among competing concept designs can be much more complex than that.

Not only do you evaluate every concept design against the problems it’s supposed to solve, but you also figure out how to deliver those solutions within the context of design constraints. Apart from the usual budget constraints, there may be challenges with fabrication methods, sourcing the right materials, securing reliable hardware component suppliers, or managing manufacturing costs.

And this brings us back to the concept testing data analysis mentioned above. You’ll find that certain design elements receive positive feedback, while others get nothing but crushing criticisms. There’s nothing wrong with that; in fact, the presence of both positive and negative reviews is an indication of concept design testing done right. In many cases, you see both high praise and harsh criticism directed toward the same concept. If you outright reject any concept that doesn’t receive complete and utter approval from the respondents, well then, you’re aiming for perfection, which unfortunately isn’t always a feasible objective to begin with. A perfect product doesn’t and can’t exist, at least not when you have to build it with all the various constraints that inevitably affect the development process and manufacturing design service effectiveness.

Choosing a concept isn’t about perfection versus imperfection; it’s about selecting one that you can develop into an optimal solution. Everybody has personal preferences, and there might be two or more solutions to the same problem. The keyword here is “optimal,” not “merely adequate,” because developing a concept into a product means optimizing the design to deliver practical solutions while maintaining strong market fit.

Takeaway

Concept design testing within the context of new product development is a lot more than just choosing between right and wrong or separating the good from the bad. It’s a process of discovery, where you’ll learn about customers’ preferences and what you can or should do to transform a mere concept into a design optimized for them in every use case scenario.

The notion of exposing potential buyers to multiple concepts early on in the development process in an attempt to gauge or rank design marketability sounds pretty straightforward, but the reality is often the exact opposite. It takes some real planning and management to recruit the right respondents who represent every group in the target demographics and to make sure that every question is framed in such a way as to solicit useful answers and insightful feedback. Concept testing isn’t something you can do on a whim, and that’s where Cad Crowd comes in. Specializing in product design and development, the freelancing platform is populated with thousands of experienced project managers, industrial designers, engineers, prototype fabricators, and digital artists to handle even the most complex concept testing for hardware products.

Cad Crowd helps you streamline the whole process, from concept design presentation and respondent recruitment to method selection and data analysis. It doesn’t matter if you need a detailed evaluation of a single concept or comparative studies to choose between competing concepts; the professionals at Cad Crowd strive to provide accurate, unbiased, and valuable insights for your NPD project. Request a quote today.