At this year’s Google I/O conference, NVIDIA and Google Cloud are accelerating the work of more than 100,000 developers in the companies’ joint developer community, which provides curated learning paths, hands-on labs and events that help them build using the full-stack NVIDIA AI platform on Google Cloud.

Launched at Google I/O last year, the community brings together developers, data scientists and machine learning engineers who want to sharpen their AI skills on the latest NVIDIA and Google Cloud technologies.

New additions for the community are rolling out this year, including a learning path for using the JAX library on NVIDIA GPUs, a new NVIDIA Dynamo codelab focused on inference optimizations and monthly developer livestreams.

Over the last year, the community has become a go‑to hub for AI builders using NVIDIA‑accelerated tools for data science and machine learning. Members have been building production‑ready retrieval-augmented generation applications on Google Kubernetes Engine (GKE) and instrumenting observability for agent workloads.

These AI builders are also experimenting with new large language model research and prototyping hybrid on‑premises and cloud inference for real‑world use cases like sports analytics and enterprise data pipelines.

Building With Google DeepMind’s Gemma, NVIDIA Nemotron and Open Frameworks

NVIDIA and Google Cloud are equipping developers with learning resources and hands-on labs that combine NVIDIA libraries, open models and tools with Google Cloud’s AI platform — so they can build optimized, production‑ready AI applications faster.

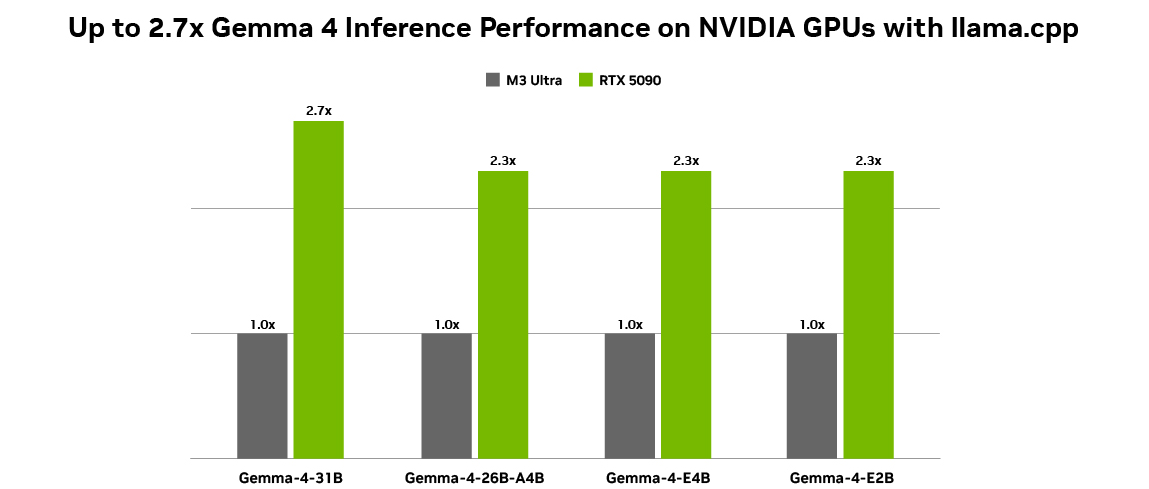

For example, developers can accelerate data science and analytics with the NVIDIA cuDF library in Google Colab Enterprise or Dataproc. They can also deploy multi-agent applications by combining Google DeepMind’s Gemma 4 models, NVIDIA Nemotron open models and Google Agent Development Kit, running them on Google Cloud G4 VMs powered by NVIDIA RTX PRO 6000 Blackwell GPUs, in Google Cloud Run or with spot instances.

NVIDIA and Google Cloud work closely across open frameworks like JAX so developers can build, scale and productize JAX workloads on NVIDIA AI infrastructure on Google Cloud — from single‑GPU experiments to multi‑rack deployments — while getting strong performance and a consistent experience.

This work extends to Google Cloud AI Hypercomputer, where the MaxText framework uses these JAX optimizations to train large models efficiently on NVIDIA GPUs.
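To make the single-device starting point concrete, the following is a minimal JAX sketch of a jit-compiled training step. It is an illustration only, not code from MaxText or from the learning path: the linear model, the synthetic data, the learning rate and every function name here are assumptions chosen for brevity. The same script runs unchanged on CPU or on an NVIDIA GPU, depending on which JAX backend is installed.

```python
import jax
import jax.numpy as jnp

LEARNING_RATE = 0.1  # illustrative value, not a recommendation


def predict(params, x):
    """Tiny linear model used only for illustration."""
    w, b = params
    return jnp.dot(x, w) + b


def loss_fn(params, x, y):
    """Mean squared error between predictions and targets."""
    return jnp.mean((predict(params, x) - y) ** 2)


@jax.jit
def train_step(params, x, y):
    """One gradient-descent step, compiled by XLA for the active backend."""
    grads = jax.grad(loss_fn)(params, x, y)
    return jax.tree_util.tree_map(
        lambda p, g: p - LEARNING_RATE * g, params, grads
    )


# Show which devices JAX found (CPU, or GPUs when a CUDA build is installed).
print("devices:", jax.devices())

# Synthetic regression data (hypothetical): y = x @ true_w + 0.3
key = jax.random.PRNGKey(0)
x = jax.random.normal(key, (64, 3))
true_w = jnp.array([1.0, -2.0, 0.5])
y = jnp.dot(x, true_w) + 0.3

params = (jnp.zeros(3), jnp.zeros(()))
initial_loss = loss_fn(params, x, y)

for _ in range(200):
    params = train_step(params, x, y)

final_loss = loss_fn(params, x, y)
print("initial loss:", float(initial_loss))
print("final loss:", float(final_loss))
```

Because the step function is pure and compiled with `jax.jit`, moving it from a laptop CPU to a GPU VM needs no code changes. Scaling across many devices would additionally involve JAX's sharding APIs, which this sketch does not cover.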

Building on the same foundation, NVIDIA Dynamo on GKE helps developers optimize large-scale inference — including mixture-of-experts models — so they can serve AI applications more efficiently with NVIDIA accelerated infrastructure on Google Cloud.

To help developers get hands-on with these capabilities, a new learning path on running and scaling JAX on NVIDIA GPUs and a new NVIDIA Dynamo on GKE inference codelab will become available next month to members of the Google Cloud and NVIDIA developer community.

Advancing Responsible AI With Google DeepMind’s SynthID and NVIDIA Cosmos

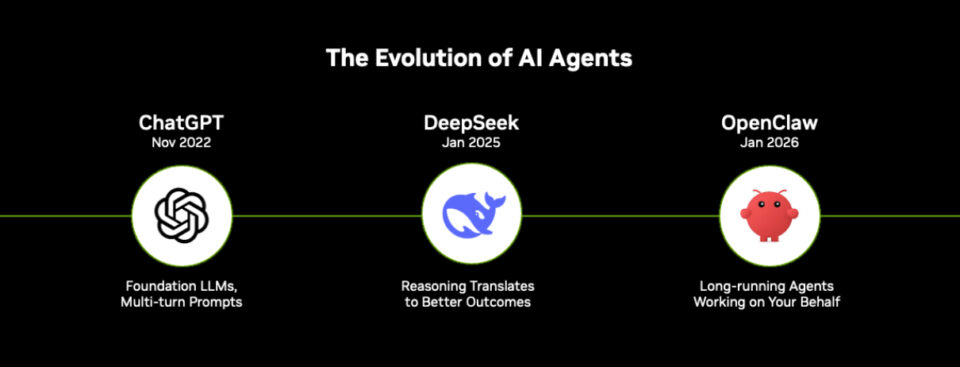

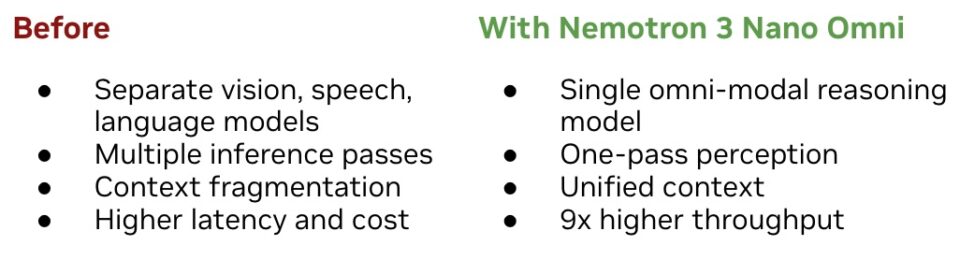

AI agents are increasingly built from a system of AI models — combining proprietary and open source models that reason, plan and act on users’ behalf.

Amid this shift, trust and transparency are foundational, so developers and organizations can understand how these systems work and what they generate.

NVIDIA was the first industry partner to collaborate with Google DeepMind on SynthID, an AI watermarking technology that embeds robust digital watermarks directly into AI‑generated content. SynthID helps preserve the integrity of outputs from NVIDIA Cosmos world foundation models available on build.nvidia.com.

Cosmos models provide rich 3D perception and simulation capabilities for robots, autonomous machines and other physical AI systems, while SynthID brings content transparency to the imagery and video they rely on.

Together, they help preserve the integrity of AI‑generated content so developers can build and deploy agentic applications more responsibly across cloud, edge and real‑world environments.

Building on a Full-Stack NVIDIA and Google Cloud Platform

This year, Google I/O is putting the spotlight on new agentic experiences and tools for developers — and NVIDIA and Google Cloud are focused on ensuring builders have the infrastructure, software and learning resources they need to make the most of them.

For developers in the community building on NVIDIA and Google Cloud, the skills they learn and the tools they adopt can scale with them, taking projects from prototype to enterprise‑grade workloads.

At Google Cloud Next, Google Cloud and NVIDIA expanded their full‑stack platform to help developers train, deploy and operationalize agents on Google Cloud. This collaboration includes work on NVIDIA Vera Rubin-powered A5X instances, Google DeepMind Gemini models and more, and is being harnessed by leading AI labs and enterprises including OpenAI, Thinking Machines Lab, Schrödinger, Salesforce, Snap and CrowdStrike. Learn more in this blog.

Join the NVIDIA and Google Cloud developer community to connect with other builders and stay up to date on new tools, developer events and programs.